The recent chaos at OpenAI has exposed some important contradictions in how we understand and approach artificial intelligence (AI). Many people view AI as an entity that can cause harm on its own, but the real concern is the people who control and develop AI systems.

AI, at its core, is a tool created and programmed by humans. It is designed to perform specific tasks and make decisions based on the data it is fed. It is not inherently good or bad; it reflects the intentions and biases of its creators. This is why it is crucial to focus on the people behind AI: they have the power to shape its capabilities and influence its behavior.

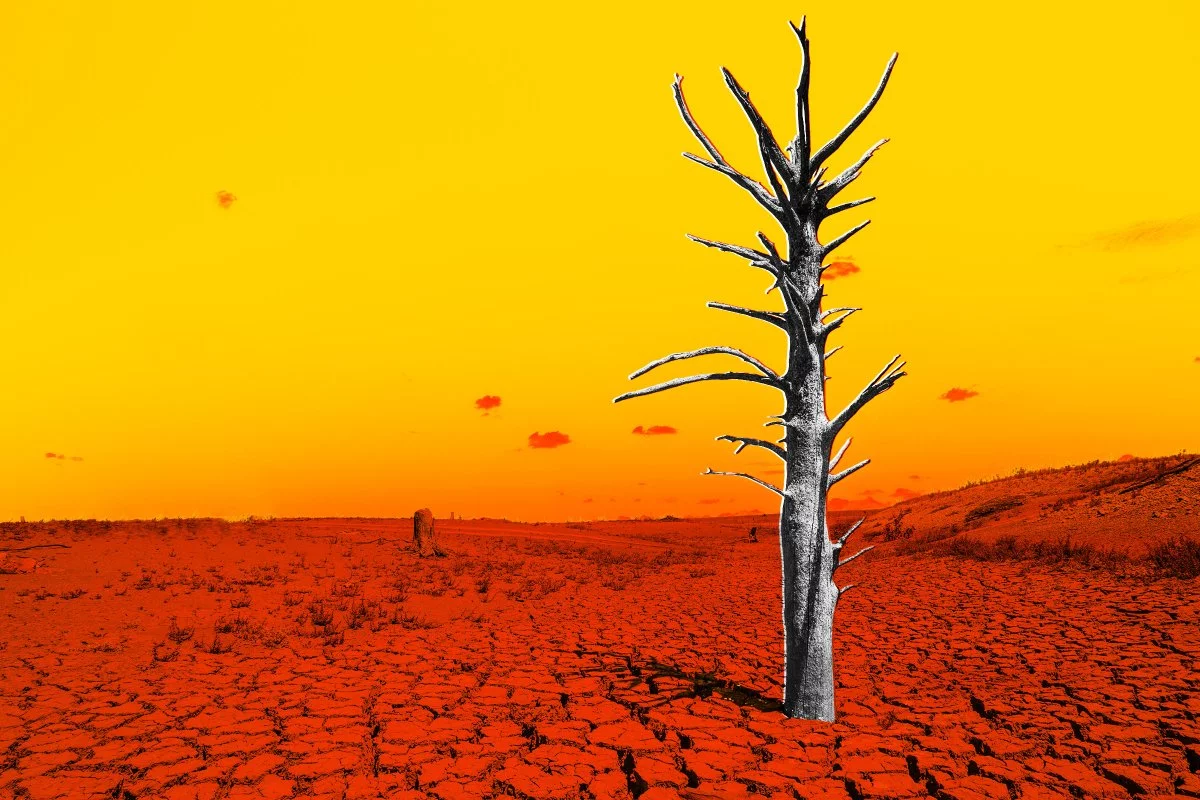

The chaos at OpenAI, an organization dedicated to the safe and beneficial development of AI, highlights the challenges and contradictions in our thinking. On one hand, we want AI to be advanced enough to solve complex problems and improve our lives. On the other hand, we fear the potential consequences of AI becoming too powerful or falling into the wrong hands. This tension underscores the need for responsible and ethical development of AI, with a focus on transparency, accountability, and inclusivity.

AI itself does not cause harm; the people who control and shape AI systems are the ones we should worry about. As AI technology advances, it is crucial that we keep the focus on those people and ensure that AI is developed and used responsibly and ethically. Only then can we fully harness the potential of AI while minimizing the risks it may pose.

[su_button url="https://www.theguardian.com/commentisfree/2023/nov/26/artificial-intelligence-harm-worry-about-people-control-openai" target="_blank" style="ghost" background="#010066" color="#010066" size="2" wide="no" center="no" radius="auto"]Read more at the Guardian[/su_button]
