Researchers have developed a wearable interface called EchoSpeech that can recognize silent speech. The device uses acoustic sensing and AI to track lip and mouth movements and interpret what the user is saying without any vocalized sound. This could be life-changing for people who are unable to communicate verbally because of a physical or mental disability, as it would give them a new way of expressing themselves.

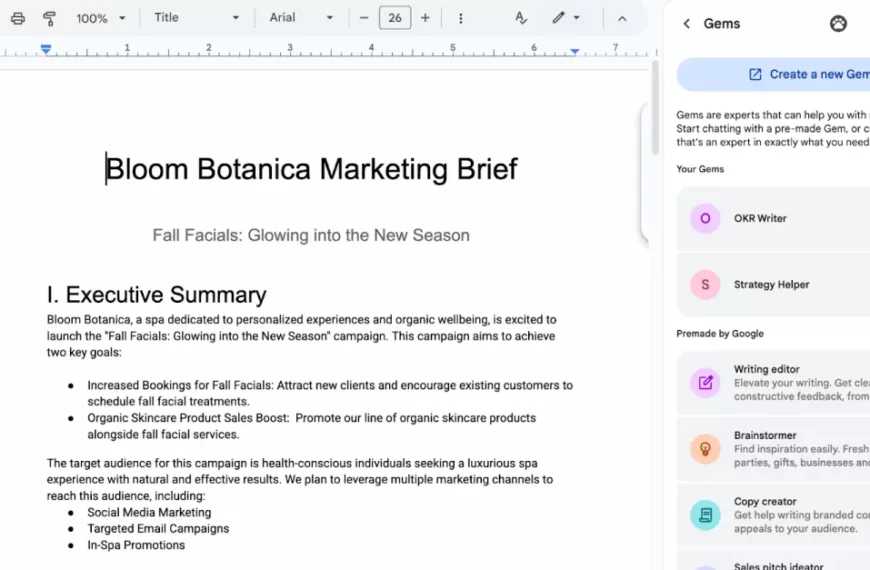

The system requires minimal training on the part of users and can recognize up to 31 unvocalized commands with accuracy above 90%. It works by using sensors embedded in a pair of eyeglasses, which detect the subtle movements around the lips and mouth that occur when a person speaks silently. These signals are then analyzed by AI algorithms, which convert them into words or phrases that others can understand.

This invention could open up new possibilities for people who previously had limited communication options, giving them greater freedom when interacting with their environment. It could also offer more privacy than traditional methods such as sign language or writing out messages on paper. Furthermore, the technology might even help bridge gaps between people from different cultures, since it works from mouth and facial movements rather than audible speech, although the commands it recognizes still have to be defined in advance.

Read more at Neuroscience News
